Management of natural history collections

Challenge

Over the past decades, natural history collections have expanded and accelerated their digitization efforts. Many museums have carried out large digitization projects, photographing specimens and registering them in their databases. These are subsequently made available by means of open access APIs. This way, researchers and others can gain access to specimen data that was previously only available through physical access to a collection. Obviously, an important part of that data is the name of the plant, animal, fungus or stone that makes up the specimen.

"A growing number of specimens have been digitized but not identified"

Taxonomy, the science of naming and organizing biological organisms, is an important discipline, as correct species identification is crucial for understanding biodiversity. In recent years, natural history collections have been forced to come to terms with something called the ‘taxonomic impediment’. This, in short, is the combination of a shortage of taxonomic experts and dwindling funds, which has led to a steady loss of available taxonomic know-how.

The juxtaposition of increased digitization with the decline in the number of taxonomists has led to a growing number of natural history specimens that have been digitized but not taxonomically identified. Often, specimens have been classified in bulk on a higher taxonomic level - family, for instance - but these generalizations are not sufficient for in-depth research, which generally requires identification on the level of species or subspecies.

The challenge was to see if we could apply deep-learning methods to the problem of unidentified specimens in our digital collection. Our two main questions were:

Is it technically feasible to create deep-learning models for image recognition which can be used effectively for the automatic identification of digitized specimens?

Is it possible to implement such models in the digitization workflow in a way that enhances the result, while minimizing extra work?

Solution

The Project

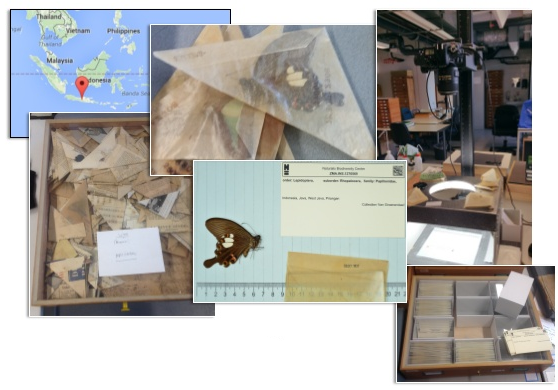

For the pilot project, we worked together with collection managers from the entomology department of Naturalis Biodiversity Center. A part of the entomology collection is formed by the Van Groenendael-Krijger collection, comprising over 200,000 butterfly specimens, collected in Indonesia in the middle of the previous century. So far, 50,000 have been digitized (photographed and logged with basic information in the collection database) and around 7,500 have been identified. Digitization is an ongoing process, that is carried out by volunteers who manually photograph, register, and repack the butterflies. An expert periodically identifies the specimens based on the photographs.

Digitization Workflow

For each specimen, a volunteer creates a database record, photographs the butterfly, and then re-packs and stores it. Periodically, the photographs are sent to a taxonomist, who identifies the specimens from the picture. Once all specimens have been identified, the information is returned, and the identifications are added to the database.

In the enhanced workflow, volunteers let the deep learning-software identify the specimen after they have taken a photo. The automatic identification is added to the data forwarded to the taxonomist. The model’s certainty is included with each identification and, ideally, the taxonomist only samples or ultimately skips identification with a high certainty, thus allowing more time for more difficult and interesting cases.

Making The Model

Using the photographs of the 7,500 identified specimens from the Papilionidae family (Swallowtails), divided into 92 classes, we created a convolutional neural network for identifying further specimens. 85 of the classes were based on species' or subspecies’ names, and in some cases the combination of the (sub)species’ name and their sex. Initial model versions used only the names as classifiers, but tests showed that for some species the visual differences between the different sexes were so distinct, that model accuracy greatly increased when the specimen’s sex was taken into account. The remaining seven classes consisted of butterflies from other, similar families, identified only by the family’s names.

Using the photographs of the 7,500 identified specimens from the Papilionidae family (Swallowtails), divided into 92 classes, we created a convolutional neural network for identifying further specimens. 85 of the classes were based on species' or subspecies’ names, and in some cases the combination of the (sub)species’ name and their sex. Initial model versions used only the names as classifiers, but tests showed that for some species the visual differences between the different sexes were so distinct, that model accuracy greatly increased when the specimen’s sex was taken into account. The remaining seven classes consisted of butterflies from other, similar families, identified only by the family’s names.

There was some variation in the number of identified specimens that were available per (sub)species. In some extreme cases, there were only two images available, the bare minimum for model purposes (one for training, one for validation). This class imbalance was not explicitly addressed in the model.

The Pilot

For the pilot, we took 400 undigitized, unlabeled specimens assumed to be from the Papilionidae family. For easy integration in the existing workflow, we implemented the recognition model as an API with a simple GUI on top of it. The GUI consisted of a locally available web page, which allowed volunteers to drag & drop newly made photos onto it, triggering the automatic identification process. They were presented with the results, which included just one candidate if its certainty was 95% or higher, or the top three candidates when it was not. Volunteers selected the appropriate identification but were required to add a note if they selected another option than the topmost candidate.

Next, the taxonomic expert independently identified the 400 specimens, without access to the automatic identifications, so the results generated by the model could be validated.

Results

Initial results showed an accuracy of 60% for the model, meaning that in 60% of the cases, identification by the model matched that of the expert, who’s opinion was considered to be correct in all cases.

Further analysis revealed that 105 of the 400 newly digitized specimens were of species that were not part of the original training set, thus illustrating the effects of the so-called "open world" problem. Ignoring these specimen, the analysis revealed an 86% accuracy for the closed world dataset (i.e. specimens of species that were present in the training set).

Some additional findings:

Five out of thirteen species with just two specimens in the dataset had a recognition rate of 100%, which puts into perspective the notion that training deep-learning models always require “big” data.

Volunteers deviated from the #1 candidate in 52 out of the 400 cases. Of those, 14 were correct choices, 28 concerned cases not present in the model, and 10 were incorrect. Of these incorrect cases, eight were changed to the wrong sex (of the right species), one was changed to a incorrect subspecies and just one to an incorrect species. Volunteers never changed a correct #1 candidate.

Conclusions

The pilot results have lead us to conclude that it is feasible to use deep-learning models as an identification tool helping to reduce the workload of taxonomic experts. Once model accuracy is deemed high enough, and taxonomic experts have vetted the model for those species, it should be possible to introduce fully automated identifications in the workflow for common, relatively easy recognizable species. As these “easy” species are likely to form the bulk of a collection, the potential gains are significant.

The pilot results have lead us to conclude that it is feasible to use deep-learning models as an identification tool helping to reduce the workload of taxonomic experts. Once model accuracy is deemed high enough, and taxonomic experts have vetted the model for those species, it should be possible to introduce fully automated identifications in the workflow for common, relatively easy recognizable species. As these “easy” species are likely to form the bulk of a collection, the potential gains are significant.

With regards to the second question, we think the pilot has successfully demonstrated that it is possible to implement deep-learning technology in a digitization workflow in a useful and effective way. The new setup required minimal extra effort from the volunteers, was easily explained, and performed stably and adequately. As it turned out, many of the volunteers felt positively engaged by the direct feedback from the identification process, and the possibility of commenting on the presented candidates.

Obviously, further tests are required to further establish the possibilities and usefulness of this technique within the domain of natural history collections. Also, acceptance of automated identifications will require review and discussion of the model’s performance, especially its prediction certainty and the effect it has on number misidentifications (false positives) among automatically identified specimens. However, based on the results, the collection managers that participated in the pilot project were enthusiastic, and we are now exploring the next steps.